It seems like everyone is doing AI-assisted development these days. For a lot of people, it feels like magic: you ask for a thing, and suddenly you have that thing, even if you have no idea how to make that thing yourself. Sometimes that’s exactly what you need. Other times it’s like a half-finished addition attached to your house with drywall screws and optimism.

Here’s the mental model I recommend to make the right decisions about using AI to assist development:

The human developer is the general contractor; AI is their crew of subcontractors.

If you’ve ever done work without a good general contractor, you already know why having an experienced one matters.

The IKEA Chair vs. The House

Even if you’ve never built anything before, you can still put together a chair from IKEA. The parts are pre-cut, the instructions are simple, and if you mess up, the worst outcome is you swear a little and redo a step. That’s vibe-coding at its best: quick, scrappy, exploratory. There’s a place for that, for sure.

But if you’ve never built a house before and you try to “learn by building” with no plan, you’ll do things in the wrong order, make irreversible mistakes, and end up redoing work constantly. I once did a bunch of drywall work to save money. When I called in the experts to mud and tape it, the result was so bad they had to do extra work to fix it and it ended up being more expensive than just hiring it all out in the first place.

The same dynamic shows up in software. Research shows that AI-assisted coding goes fast but sometimes creates rework that eats up all those gains. Prompt until it compiles, prompt until the tests pass (if there even are tests), prompt until the demo works. That approach is fine for small scripts, hobby projects, spike solutions, or throwaway prototypes. But if you’re building a real production system with real users, real security concerns, and a future you have to live in, vibe-coding without experienced oversight isn’t bold. It’s expensive. You just don’t get the invoice until later. Security vulnerabilities are the most expensive version of that deferred invoice; a hobbyist app that accidentally exposes user data isn’t just technical debt, it’s a serious liability.

The General Contractor Model

A general contractor doesn’t usually swing the hammer much themselves. They aren’t framing, wiring, plumbing, or roofing the house. Their real job is higher-level, just like an AI-orchestrating developer. They:

- Translate requirements into a plan

- Sequence work to avoid rework

- Set guardrails so people don’t do the wrong things

- Keep the site and project organized

- Inspect what gets built before it’s too late to change

- Manage change orders from the customer

- Deliver a finished house, not one that just looks okay from a distance

AI is a powerful crew of subcontractors. But subcontractors don’t replace the general contractor. They make a good GC faster than they’d be working alone. A bad GC with more subs just creates chaos at a faster rate.

Where the Analogy Holds Up

Here are a few ways this metaphor maps cleanly onto your team’s approach to AI-assisted development.

Sequencing

If you do things out of order, you get rework. Lots of it. In software, sequencing shows up as introducing seams before refactoring, agreeing on contracts before wiring integrations, setting up CI and linting before accelerating output, and planning data migrations before piling features on top.

AI will happily help you do the wrong step extremely quickly. The GC mindset is: “What’s the right next step so we don’t pay twice?”

Remodel vs. New Construction

AI shines on greenfield projects because there are fewer hidden constraints. You’re building new walls, not discovering mystery wiring behind old ones.

Large legacy codebases are remodels. They have inconsistent patterns, tribal knowledge, leaky abstractions, and scary corners that only exist because of production reality. Context size matters too. The model can’t “see” the whole codebase at once, unlike the developer who holds a mental map of the system.

So the GC move is to reduce how much the AI has to guess about, just like you would with a subcontractor: give tight scopes, provide the relevant interfaces and constraints, anchor changes in tests and contracts, and work in smaller, bounded slices.

One Trade at a Time

A roofer is not your electrician. Stop asking the model to “implement the feature, refactor the architecture, improve performance, and make it secure” in a single prompt. Instead, treat the model like a trade:

- “Generate a migration plan and the SQL scripts.”

- “Write tests that cover these edge cases.”

- “Implement this function behind this interface with these constraints.”

- “Review this PR against our security checklist.”

- “Propose two approaches and list tradeoffs.”

One task. One clear definition of done.

Change Orders and Scope Creep

Construction deadlines get wrecked by endless “small tweaks.” Software does too, especially when AI is involved. Every time you say “actually also…” in a prompt, you’re issuing a change order. That’s not inherently bad, but an experienced GC knows to re-baseline the plan when scope shifts. Vibe-coding just keeps hammering and three “small tweaks” later, the model has quietly restructured something it shouldn’t have touched.

Where the Analogy Breaks Down

No metaphor is perfect, and it’s worth being honest about the gaps. Software is far more malleable than a building. You can often undo mistakes relatively cheaply: reverting a commit is easier than un-pouring concrete. And unlike physical construction, you can spin up an identical copy of your “building” in seconds for testing.

The metaphor also understates how collaborative the relationship with AI can be. A subcontractor doesn’t help you think about the design, but AI genuinely can. Using it to explore approaches, pressure-test ideas, and draft alternatives is one of its highest-value uses, and that doesn’t map neatly onto the GC/sub relationship.

Still, the core insight holds: someone needs to be directing the work, sequencing it, and inspecting the results. That job doesn’t go away just because the tools got faster.

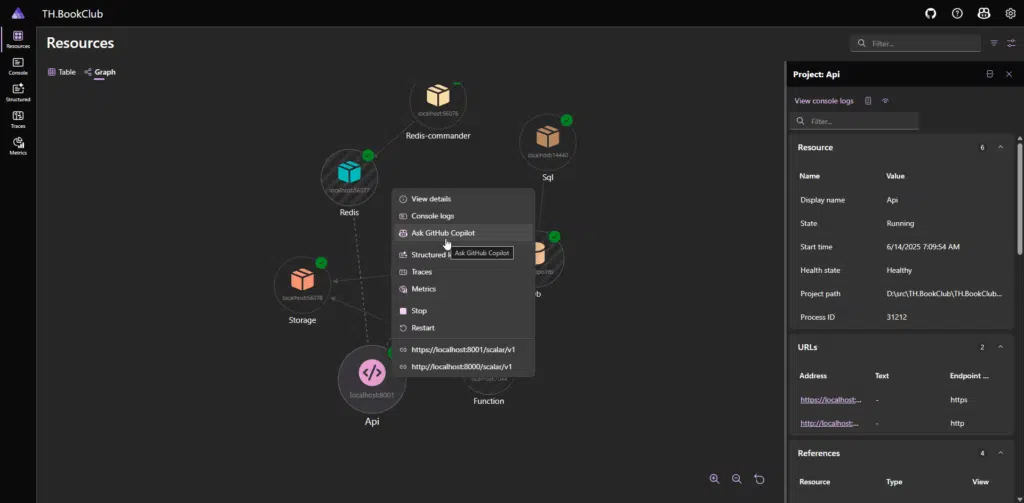

What This Actually Looks Like: A Walkthrough

Everything above is the theory. Here’s what it looks like in practice. I’ll walk through a single, realistic task: adding a new API endpoint to an existing service. This is the way I might actually approach it with AI as my subcontractor.

The task: our team needs a new POST /api/orders/{id}/refund endpoint on an existing .NET order management service. It needs to validate the order is eligible for a refund, process it through our payment provider, update the order status, and emit a domain event.

Before I Open the AI: Scoping the Work

I don’t start by prompting. I start by writing a short spec for myself: a few sentences I’d write on a sticky note if I were handing this to a teammate. This takes maybe five minutes:

What success looks like: A new endpoint that processes refunds for eligible orders, integrates with Stripe, updates order state, and emits an OrderRefunded event. Behind a feature flag. Covered by unit and integration tests.

Constraints: Follow our existing CQRS pattern (command handler + validator). Use the IPaymentGateway abstraction; don’t call Stripe directly. Orders can only be refunded if they’re in Completed status and less than 30 days old.

Non-goals: No partial refunds yet. No UI changes. No changes to the existing order state machine beyond adding the Refunded status.

This is my blueprint. Everything I ask the AI to do will trace back to it. If I can’t articulate what “done” looks like, I’m not ready to delegate; I’m just hoping.

Step 1: Lay the Foundation Before Building Walls

The vibe-coding version of this task would be a single prompt: “Add a refund endpoint to my order service.” The AI would produce something plausible, probably with a dozen decisions baked in that I didn’t make and may not agree with.

Instead, I sequence the work the way a GC would schedule trades. Electricians before drywall. Foundation before framing.

My first prompt isn’t about the endpoint at all. It’s about the test:

“Here’s our ProcessOrderCommand handler and its unit tests [pasted]. Write unit tests for a new RefundOrderCommand handler with these rules: the order must be in Completed status, must be less than 30 days old, and must not have been previously refunded. Test each validation rule independently. Follow the same test patterns and naming conventions you see in the existing tests. Don’t write the handler yet, just the tests.”

Why tests first? Because they force me to think through the behavior before the implementation exists, they give the AI an unambiguous target to hit when I ask it to write the handler, and they give me a way to verify the next step actually works. I’m laying foundation.

I review the tests. The AI nailed the pattern matching but missed a case: what happens if the order doesn’t exist at all? I ask it to add that test. Two minutes of review saved me from discovering a missing null check in production.
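To make the “one test per rule” idea concrete, here is a minimal sketch in Python rather than the service’s own .NET code. Everything in it is an assumption made for illustration: the `Order` shape, the `refund_rejection_reason` stand-in (included only so the tests have something to run against), the reason strings, and the choice to measure an order’s age from a `created_at` timestamp.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

REFUND_WINDOW = timedelta(days=30)  # "less than 30 days old" from the spec


@dataclass
class Order:
    id: str
    status: str           # e.g. "Completed", "Pending", "Refunded"
    created_at: datetime  # assumption: order age is measured from creation


def refund_rejection_reason(order: Optional[Order], now: datetime) -> Optional[str]:
    """Hypothetical stand-in validator: returns None when a refund is allowed."""
    if order is None:
        return "order_not_found"
    if order.status == "Refunded":
        return "already_refunded"
    if order.status != "Completed":
        return "not_completed"
    if now - order.created_at >= REFUND_WINDOW:
        return "too_old"
    return None


NOW = datetime(2024, 6, 1)  # fixed clock so the tests are deterministic


def test_allows_recent_completed_order():
    order = Order("o-1", "Completed", NOW - timedelta(days=29))
    assert refund_rejection_reason(order, NOW) is None


def test_rejects_order_that_is_not_completed():
    order = Order("o-2", "Pending", NOW - timedelta(days=1))
    assert refund_rejection_reason(order, NOW) == "not_completed"


def test_rejects_order_that_is_exactly_30_days_old():
    order = Order("o-3", "Completed", NOW - timedelta(days=30))
    assert refund_rejection_reason(order, NOW) == "too_old"


def test_rejects_order_that_was_already_refunded():
    order = Order("o-4", "Refunded", NOW - timedelta(days=1))
    assert refund_rejection_reason(order, NOW) == "already_refunded"


def test_rejects_missing_order():
    # The case the first draft of the tests left out.
    assert refund_rejection_reason(None, NOW) == "order_not_found"
```

Each test pins down exactly one rule, so a failure points at a single decision instead of at “refunds are broken.”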

Step 2: Build the Walls

Now I ask for the handler, and the prompt is tight because I’ve already set up the guardrails:

“Now write the RefundOrderCommandHandler. It should pass all the tests you just wrote. Use our existing IPaymentGateway interface for the actual refund call. Here’s the interface [pasted]. Add a Refunded status to the OrderStatus enum. Emit an OrderRefundedEvent using our existing IDomainEventPublisher. Here’s that interface [pasted]. Don’t add any new dependencies or packages.”

Notice what I’m doing: I’m giving it the interfaces it needs to code against, telling it what it can’t change, and pointing it at the tests it needs to pass. This is a bounded work order. One trade, one job, clear definition of done.

The output comes back. I read it. All of it! It looks clean, follows the pattern. But I notice it’s catching a generic Exception from the payment gateway instead of the specific PaymentFailedException our gateway throws. Small thing. Easy to miss if you’re skimming. I ask it to fix that specific issue.
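The difference between the two catch clauses is easy to underestimate, so here is a small Python sketch of it. The `FakePaymentGateway`, the `refund_order` function, and the returned strings are hypothetical stand-ins; only the exception name comes from the walkthrough.

```python
class PaymentFailedException(Exception):
    """Stand-in for the specific exception the gateway is said to throw."""


class FakePaymentGateway:
    """Hypothetical test double: raises whatever error it was built with."""

    def __init__(self, error=None):
        self.error = error

    def refund(self, order_id: str) -> None:
        if self.error is not None:
            raise self.error


def refund_order(order_id: str, gateway) -> str:
    try:
        gateway.refund(order_id)
    except PaymentFailedException as exc:
        # Expected failure mode: report it as a failed payment.
        return f"payment_failed: {exc}"
    # Anything else (a bug, a bad config) is deliberately not caught here,
    # so it surfaces instead of being mislabeled as a payment failure.
    return "refunded"
```

With a generic catch, a programming error inside the gateway call would be reported to the caller as a declined refund, which is exactly the kind of problem that hides until production.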

Step 3: Wire It Up

My next prompt is the API controller and route:

“Write the API endpoint POST /api/orders/{id}/refund that dispatches the RefundOrderCommand. Follow the same pattern as our existing CompleteOrderController [pasted]. Include the feature flag check using our IFeatureFlagService. The flag name should be enable-order-refunds. Return 404 if the order isn’t found, 409 if it’s not eligible, and 200 with the updated order on success.”

Again, this is one job, the existing patterns are provided, and specific status codes are defined. The AI doesn’t have to guess at any of this.
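The status-code contract in that prompt is small enough to write down as a table. The Python sketch below does that; `RefundOutcome`, `handle_refund_request`, and the callable parameters are hypothetical names. The prompt does not say what to return when the flag is off, so responding 404 in that case is an assumption of this sketch, not something the walkthrough specifies.

```python
from enum import Enum
from typing import Callable, Optional, Tuple


class RefundOutcome(Enum):
    REFUNDED = "refunded"
    NOT_FOUND = "not_found"
    NOT_ELIGIBLE = "not_eligible"


FLAG_NAME = "enable-order-refunds"  # flag name from the prompt

# The contract from the prompt, stated once.
STATUS_FOR_OUTCOME = {
    RefundOutcome.REFUNDED: 200,
    RefundOutcome.NOT_FOUND: 404,
    RefundOutcome.NOT_ELIGIBLE: 409,
}


def handle_refund_request(
    order_id: str,
    is_enabled: Callable[[str], bool],
    dispatch: Callable[[str], Tuple[RefundOutcome, Optional[dict]]],
) -> Tuple[int, Optional[dict]]:
    # Assumption: with the flag off, the route behaves as if it does not exist.
    if not is_enabled(FLAG_NAME):
        return 404, None
    outcome, order = dispatch(order_id)
    # Only a successful refund returns the updated order in the body.
    body = order if outcome is RefundOutcome.REFUNDED else None
    return STATUS_FOR_OUTCOME[outcome], body
```

Keeping the mapping in one place means a reviewer can check it against the prompt in a few seconds.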

Step 4: Inspection

The code is written. Now I inspect it, and I use the AI to help me do it.

“Review the RefundOrderCommandHandler for potential issues: thread safety, error handling gaps, missing validation, and anything that violates the patterns in the existing handler I showed you earlier. Be specific.”

It flags something I hadn’t considered: there’s no idempotency check. If the endpoint gets called twice, it’ll try to refund twice through Stripe. Good catch. I ask it to add an idempotency guard and a test for it.
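One way such a guard could look is sketched below in Python. It is an illustration under assumptions, not the handler from this walkthrough: `FakePaymentGateway`, the `idempotency_key` parameter, and the `refund-<order id>` key format are all hypothetical. The sketch combines a status check with a deterministic key that the gateway can use to drop duplicates.

```python
from dataclasses import dataclass, field


@dataclass
class Order:
    id: str
    status: str = "Completed"


@dataclass
class FakePaymentGateway:
    """In-memory stand-in for the gateway; remembers the keys it has seen."""

    seen_keys: set = field(default_factory=set)
    refunds_issued: int = 0

    def refund(self, order_id: str, idempotency_key: str) -> None:
        if idempotency_key in self.seen_keys:
            return  # repeat of a request already processed: do nothing
        self.seen_keys.add(idempotency_key)
        self.refunds_issued += 1


def refund_order(order: Order, gateway: FakePaymentGateway) -> str:
    # Guard 1: a sequential repeat sees the Refunded status and stops early.
    if order.status == "Refunded":
        return "already_refunded"
    # Guard 2: a deterministic key lets the gateway drop a duplicate that
    # slips past guard 1 (for example, a retry after a timeout).
    gateway.refund(order.id, idempotency_key=f"refund-{order.id}")
    order.status = "Refunded"
    return "refunded"


# Usage: the second call does not reach the gateway at all.
order, gateway = Order("o-1"), FakePaymentGateway()
first = refund_order(order, gateway)
second = refund_order(order, gateway)
```

The status check alone does not cover the case where the status update is lost or two requests race, which is why the sketch also hands the gateway a key it can deduplicate on.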

I run the full test suite. I run our linter. I check that CI is green.

Step 5: The Punch List

The feature works. But “works” isn’t necessarily “shippable.” I have a few more prompts:

“Write a brief runbook entry for the refund endpoint: what it does, how to enable/disable the feature flag, what to check if refunds start failing, and how to manually verify a refund in Stripe’s dashboard.”

“Add structured logging to the handler. Log when a refund is initiated, when it succeeds, and when it fails, including the order ID and the reason for failure. Follow our existing logging patterns [pasted].”

Docs, observability, operational readiness. The punch list is unglamorous but it’s the difference between “it works on my machine” and “we can support this in production at 2 AM.”
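As a rough picture of the logging half of that punch list, here is a Python sketch using the standard `logging` module. The logger name, the `refund_with_logging` wrapper, and the field names are assumptions for illustration; the team’s real logging patterns would dictate the actual shape.

```python
import logging

logger = logging.getLogger("orders.refunds")  # hypothetical logger name


class PaymentFailedException(Exception):
    """Stand-in for the gateway's failure exception."""


def refund_with_logging(order_id: str, issue_refund) -> bool:
    """Run issue_refund(order_id) and log each stage with structured fields."""
    # Keys passed via `extra` become attributes on the log record, so a
    # structured formatter can emit them as fields rather than as prose.
    logger.info("refund initiated", extra={"order_id": order_id})
    try:
        issue_refund(order_id)
    except PaymentFailedException as exc:
        logger.warning(
            "refund failed",
            extra={"order_id": order_id, "reason": str(exc)},
        )
        return False
    logger.info("refund succeeded", extra={"order_id": order_id})
    return True
```

The order ID travels as a field on every entry, so whoever is on call can filter one refund’s whole history without parsing message text.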

What Just Happened

The whole process might have been six or seven prompts across an hour. A vibe-coded version might have been one prompt and fifteen minutes. But look at what the sequenced approach produced: tests that were written before the code, a handler that follows existing patterns and passes those tests, an endpoint behind a feature flag, an idempotency guard I might have missed entirely, structured logging and a runbook, and a clean PR that a reviewer can actually follow.

Every prompt was one job, scoped tightly, with the context the AI needed and nothing more. I wasn’t typing more code, I was directing the work and inspecting the results. That’s the general contractor model in practice.

Experience Is the Multiplier

If you hire a less-experienced general contractor who has only done carpentry, they might be talented and hardworking and still run the project poorly. They won’t know what order things need to happen in, where hidden risks live, what steps you can’t skip, or which shortcuts create future disasters.

It’s the same with AI and less-experienced developers. AI can amplify inexperience. A junior developer with AI might produce more code than ever before, but “more code” was never the goal. You want correct outcomes, properly integrated, with a future you can maintain.

An experienced developer using AI looks like a wizard, not because they type faster, but because they know which questions to ask, which steps to sequence first, and which output to reject. The AI didn’t give them that judgment. It just gave them leverage to apply it faster.

Bring It Home

If you’re building IKEA furniture software, like a proof-of-concept or a throwaway prototype, vibe-code away. Optimize for speed and learning. But if you’re building a house you have to live in, be the general contractor. Plan the work. Scope it tightly. Set guardrails. Inspect everything. And remember that AI is the fastest crew you’ve ever had, but a crew without a contractor just builds you an expensive mess, faster.

The developers who thrive in this era won’t be the ones who prompt the fastest. They’ll be the ones who direct the work the best.